January 10, 2024

The company is the only B2C e-commerce platform built for the African mass market. They have 2 million customers who have placed over 13 million orders on their platform. Through a combination of digital channels and 50,000 agents across Kenya, the company serves over 800 million middle- to low-income consumers.

THE CHALLENGE

African consumers buy around 70% of their personal care products, food, and beverages from over 2.5 million small retail outlets. Compared to these traditional retail models, the company offers its customers the ability to place orders through an agent’s catalogue or on the company’s website.

As the company grew, their business users required different Machine Learning (ML) models for their business units. The Finance team needed various pricing models to cater to their price-sensitive customer base and manage the competition from other retailers. The Marketing team required Segmentation, Targeting and Positioning (STP) models and event planning models. The Operations team needed demand forecasting models to manage their core operations of picking, packing, sorting and transportation. Based on these models, the Operations team could determine the number of trucks required, Stock Keeping Unit (SKU) level forecasts, the optimized Minimum Order Quantity (MOQ), and the manpower required in each location. Our company developed and delivered these ML models.
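To illustrate how a demand forecast feeds an operational decision such as truck planning, here is a minimal sketch. The SKU names, forecast volumes, and truck capacity are hypothetical values chosen for illustration; they are not figures from this engagement, and the actual models are not described in this detail.

```python
import math

# Hypothetical SKU-level daily forecast (units) for one location.
sku_forecast = {"SKU-A": 1200, "SKU-B": 450, "SKU-C": 875}


def trucks_required(forecast_by_sku, units_per_truck):
    """Return the smallest number of trucks that can carry the forecast volume."""
    if units_per_truck <= 0:
        raise ValueError("units_per_truck must be positive")
    total_units = sum(forecast_by_sku.values())
    # Round up: a partially filled truck is still a truck.
    return math.ceil(total_units / units_per_truck)


# Assumed capacity of 1,000 units per truck (illustrative only).
print(trucks_required(sku_forecast, units_per_truck=1000))
```

The same pattern, a forecast total divided by a per-resource capacity and rounded up, would apply to manpower planning per location.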

As each business unit built their own models, the company’s management team realized that the data remained siloed. Divisions did not have immediate access to the ML models from other teams. Additionally, each business unit developed and managed their models differently. As a result, the observability and output of these models were disjointed. This led to the IT teams spending a lot of time on model-related maintenance activities.

The company needed an IT partner to enable an enterprise Machine Learning Operations (MLOps) solution. This would first involve creating a centralized data repository for cross-functional visibility. Then, for the existing ML models, the partner would have to define a centralized code check-in process, and automate the maintenance, monitoring, and observability of the different models to sustain accuracy and detect drift.

THE SOLUTION

The team of architects and data engineers from Prescience Decision Solutions, a Movate company, understood that one of the core challenges was that the data being fed into the ML models was not standardized. To provide a centralized data repository, the team designed and developed an Enterprise Data Warehouse (EDW). The existing ML models were reconfigured to extract data from this new EDW. The output of these ML models was provided to different stakeholders through Business Intelligence (BI) dashboards built on Tableau.

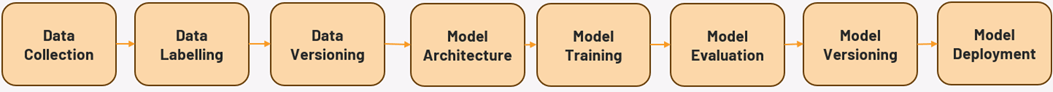

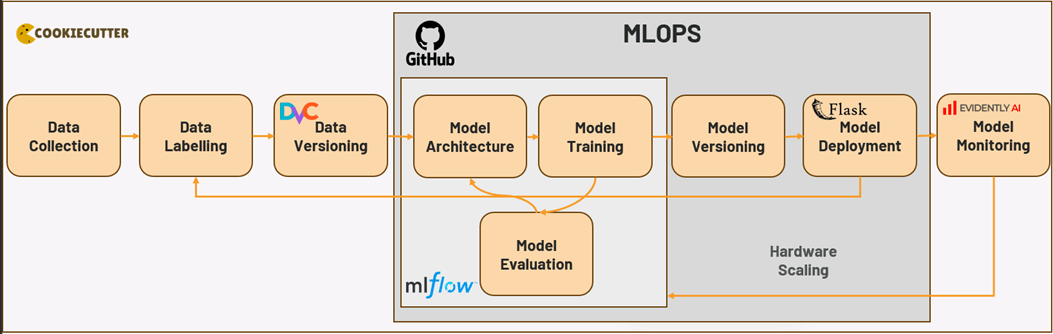

As part of the MLOps enablement, the team established a unified project structure for all the identified ML use cases. They centralized the management of notebooks using Git. All the datasets were tracked using Data Version Control (DVC). The team enabled an in-house feature store for faster deployment of all ML models. The orchestration of the workflows was managed through GitHub Actions. The team centralized the tracking of ML models and developed retraining strategies using open-source tools. Together, these changes shortened decision-making time and led to faster approvals from the company's stakeholders.
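The idea behind dataset tracking can be sketched in a few lines: record a content fingerprint for each dataset version so that any change to the data is detectable and can be tied to a model run. This is not DVC's implementation or API; the function names and the choice of SHA-256 are assumptions made for this illustration.

```python
import hashlib


def dataset_fingerprint(data: bytes) -> str:
    """Return a hex digest that identifies this exact dataset content."""
    # SHA-256 is an assumption for this sketch, not a statement about DVC.
    return hashlib.sha256(data).hexdigest()


def has_changed(data: bytes, recorded_fingerprint: str) -> bool:
    """True if the data no longer matches the fingerprint recorded earlier."""
    return dataset_fingerprint(data) != recorded_fingerprint


# Hypothetical dataset contents, for illustration only.
version_1 = b"sku,units\nSKU-A,10\n"
recorded = dataset_fingerprint(version_1)

version_2 = version_1 + b"SKU-B,5\n"
print(has_changed(version_1, recorded))  # unchanged data
print(has_changed(version_2, recorded))  # a row was appended
```

Storing the small fingerprint in Git alongside the code, while the data itself lives in separate storage, is what lets a code commit pin the exact dataset it was trained on.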

The ML models were managed in MLflow. Based on its performance, each model was moved from the staging layer to the User Acceptance Testing (UAT) step and finally to the production environment through MLflow. Once a model was deployed, it was made available to the EDW and BI reports using Flask. Evidently AI was used for model observability. This helped users to track each model, understand which models were performing well, set thresholds for different Key Performance Indicators (KPIs), and plan for retraining the model.
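The promotion and retraining logic described above can be sketched as a simple performance gate. This is a plain-Python illustration that does not call MLflow or Evidently AI; the stage names, the KPI names, and every threshold value are hypothetical.

```python
# Ordered lifecycle stages, following the staging -> UAT -> production flow.
STAGES = ["staging", "uat", "production"]


def next_stage(current: str, accuracy: float, threshold: float) -> str:
    """Advance the model by one stage if it meets the threshold; otherwise stay."""
    idx = STAGES.index(current)  # raises ValueError for an unknown stage
    if accuracy >= threshold and idx < len(STAGES) - 1:
        return STAGES[idx + 1]
    return current


def kpis_below_threshold(kpis: dict, thresholds: dict) -> list:
    """Return the names of KPIs that fell below their minimum threshold."""
    # A KPI that is missing from the report is treated as failing.
    return [
        name
        for name, minimum in thresholds.items()
        if kpis.get(name, float("-inf")) < minimum
    ]


# Hypothetical monitoring report and thresholds, for illustration only.
report = {"accuracy": 0.78, "recall": 0.91}
minimums = {"accuracy": 0.80, "recall": 0.85}

print(next_stage("staging", accuracy=0.90, threshold=0.85))
failing = kpis_below_threshold(report, minimums)
if failing:
    print("Plan retraining; KPIs below threshold:", failing)
```

Keeping the gate as a pure function of metrics and thresholds makes it easy to run the same check from an automated workflow or from a dashboard.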

The technologies used for this engagement included:

- Git and GitHub Actions

- Cookiecutter

- MLflow

- Flask

- Evidently AI

- DVC (Data Version Control)

- AWS

THE IMPACT

With the shift to MLOps, the business users benefitted from having quicker and easier access to the ML model reports. The company was also able to track the ML model accuracy and the corresponding business impact. The entire ML model deployment and maintenance process was automated, which also streamlined the deployment of future models.